Blog Post 3 - Finding the Ideal Spectral Radius

April 6, 2026

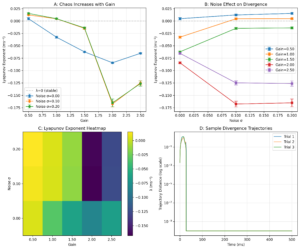

Coming into this week’s blog post, I really wanted to show off some fancy new results that looked promising. I spent all of last week coding a new and revised Experiment 1, and here is what I got:

Yeah… it’s not looking great. Here’s the issue I have with my results. Graph A’s goal was simply to compare my FTLE (finite-time Lyapunov exponent) against gain. Last week, I briefly mentioned what a negative FTLE means: a stable, non-chaotic attractor, where trajectories converge instead of diverging. That is what is happening here. I’m not getting chaotic behavior even though all the mathematical signs point towards more chaos with more gain. So, why is this?

This explains it:

Spectral radius: 7.065

Saturation fraction: 96.8%

Imagine my neural network as a large table with hundreds of cups. These cups can be filled or emptied by an outside source, and can even share water between them based on my network weights. However, the two values shown above basically mean that I have taken a giant bucket and poured it all over the table, leaving every cup overflowing and water getting everywhere. Spectral radius, the largest absolute value among the eigenvalues of my weight matrix, acts similarly to an FTLE: when it is < 1, signals and gradients diminish over time; when it equals 1, signals are stable and memory is preserved; and when it is > 1, states grow exponentially and things become unstable. I generally want a spectral radius that is slightly greater than 1 for this project, but having it at 7 means that everything is blown way out of proportion.
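To make those three regimes concrete, here is a minimal sketch (not the experiment code). It assumes a purely linear update `x <- W @ x` with no nonlinearity, and the network size, seeds, and function names are illustrative choices of mine. It rescales one random matrix to a few spectral radii and checks how large the state is after 50 steps.

```python
import numpy as np

def spectral_radius(W):
    """Largest absolute value among the eigenvalues of W."""
    return float(np.max(np.abs(np.linalg.eigvals(W))))

def norm_after(W, steps=50, seed=1):
    """Norm of the state after repeatedly applying the linear map x -> W @ x."""
    x = np.random.default_rng(seed).normal(size=W.shape[0])
    x /= np.linalg.norm(x)  # start at unit length
    for _ in range(steps):
        x = W @ x
    return float(np.linalg.norm(x))

rng = np.random.default_rng(0)
n = 100  # illustrative network size (assumption)
W = rng.normal(0.0, 1.0 / np.sqrt(n), size=(n, n))
W_unit = W / spectral_radius(W)  # spectral radius is now 1

for rho in (0.5, 1.0, 7.0):
    size = norm_after(rho * W_unit)
    print(f"spectral radius {rho}: state norm after 50 steps = {size:.3e}")
```

With a radius of 0.5 the state shrinks towards zero, and with a radius of 7 it explodes. A real network with a squashing nonlinearity cannot explode like this, which is where saturation comes in.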

This continues until each neuron gets saturated (think of this as all the cups being full). No matter how chaotic I want my network to behave, there is no place for all the water to go, and thus the network converges (no movement or change). This is why I’m getting a negative Lyapunov exponent. Now, everyone’s favorite part: fixing my mistakes.
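Here is a rough sketch of what saturation looks like numerically. It assumes a plain discrete-time network `x <- tanh(W @ x)` with no external input, and it counts a unit as saturated when its activation exceeds 0.99 in absolute value. Both the update rule and the threshold are assumptions for illustration, not necessarily what the experiment uses. The key quantity is the slope of tanh at each unit, `1 - x**2`: when it is close to zero, a small nudge to that unit barely changes its output.

```python
import numpy as np

def run_tanh_network(W, steps=200, seed=1):
    """Iterate x <- tanh(W @ x) from a small random state; return the final state."""
    x = 0.1 * np.random.default_rng(seed).normal(size=W.shape[0])
    for _ in range(steps):
        x = np.tanh(W @ x)
    return x

def saturation_fraction(x, threshold=0.99):
    """Fraction of units whose |activation| exceeds the (assumed) threshold."""
    return float(np.mean(np.abs(x) > threshold))

rng = np.random.default_rng(0)
n = 200  # illustrative network size (assumption)
W = rng.normal(0.0, 1.0 / np.sqrt(n), size=(n, n))
W /= np.max(np.abs(np.linalg.eigvals(W)))  # rescale to spectral radius 1

results = {}
for rho in (1.2, 7.0):
    x = run_tanh_network(rho * W)
    slope = 1.0 - x**2  # derivative of tanh at each unit's operating point
    results[rho] = (saturation_fraction(x), float(slope.mean()))
    print(f"radius {rho}: saturated = {results[rho][0]:.1%}, mean slope = {results[rho][1]:.3f}")
```

At the larger radius, more units sit on the flat part of tanh and the average slope drops, which is the "full cups" picture in numbers.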

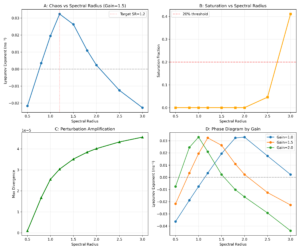

The first change I’m implementing is at the very root: the initialization of my matrix. In my initialization method, I implemented code that finds the spectral radius (the largest eigenvalue magnitude) and multiplies the matrix by the ratio of the target spectral radius (1.2) to the current one. This should effectively help with my issues. Keyword: should. I still need to test it. So, I created a small test function that varies the spectral radius. Here goes!
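The rescaling step can be sketched like this. The function name, matrix size, and initial weight scale are hypothetical. The one fact it relies on is that multiplying a matrix by a scalar multiplies every eigenvalue by that scalar, so scaling by target / current lands exactly on the target radius.

```python
import numpy as np

def rescale_to_spectral_radius(W, target=1.2):
    """Return a copy of W scaled so that its spectral radius equals target."""
    current = np.max(np.abs(np.linalg.eigvals(W)))
    if current == 0:
        raise ValueError("cannot rescale a matrix whose spectral radius is zero")
    return W * (target / current)

def spectral_radius(W):
    """Largest absolute value among the eigenvalues of W."""
    return float(np.max(np.abs(np.linalg.eigvals(W))))

rng = np.random.default_rng(0)
W_raw = rng.normal(0.0, 0.5, size=(200, 200))  # deliberately oversized weights (assumption)
W = rescale_to_spectral_radius(W_raw, target=1.2)

print(f"before: {spectral_radius(W_raw):.3f}")
print(f"after:  {spectral_radius(W):.3f}")
```

Scaling the whole matrix keeps the relative structure of the weights intact; only the overall strength changes.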

Now, this is EXACTLY what I’ve been waiting for. It’s honestly so satisfying looking at this. Graph A shows that my suspicions were right: there is an ideal spectral radius that results in the greatest Lyapunov exponent, and values that are either too low or too high result in attraction. Graph B adds to this, showing that saturation certainly does occur at higher spectral radius values. Graph C shows the slope of divergence diminishing across spectral radii, which makes sense as well. This is really exciting for me, as this is the behavior that I have seen in past papers. For example, “Lyapunov spectra of chaotic recurrent neural networks” by Engelken et al. posits that as the coupling strength between neurons increases, mass saturation occurs, bending the Lyapunov spectrum downwards.
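For anyone who wants to try a sweep like the one behind Graph A, here is a minimal template. It assumes a toy update `x <- tanh(W @ x)` and estimates the largest FTLE by pushing a tangent vector through the Jacobian at each step and averaging its log growth. The step counts, sizes, and names are illustrative. Whether the curve bends back down at large radii depends on details this toy leaves out (inputs, leak, where gain enters), so treat it as a skeleton for the sweep rather than a reproduction of my graphs.

```python
import numpy as np

def ftle(W, steps=500, burn_in=200, seed=1):
    """Estimate the largest finite-time Lyapunov exponent of x <- tanh(W @ x)."""
    rng = np.random.default_rng(seed)
    n = W.shape[0]
    x = 0.1 * rng.normal(size=n)
    for _ in range(burn_in):  # discard the initial transient
        x = np.tanh(W @ x)
    v = rng.normal(size=n)
    v /= np.linalg.norm(v)  # unit-length tangent vector
    log_growth = 0.0
    for _ in range(steps):
        x = np.tanh(W @ x)
        v = (1.0 - x**2) * (W @ v)  # Jacobian diag(1 - x_new^2) @ W applied to v
        growth = np.linalg.norm(v)
        log_growth += np.log(growth)
        v /= growth  # renormalize so v never overflows or underflows
    return log_growth / steps

rng = np.random.default_rng(0)
n = 100  # illustrative network size (assumption)
W = rng.normal(0.0, 1.0 / np.sqrt(n), size=(n, n))
W_unit = W / np.max(np.abs(np.linalg.eigvals(W)))  # spectral radius 1

sweep = {rho: ftle(rho * W_unit) for rho in (0.5, 1.2, 3.0, 7.0)}
for rho, value in sweep.items():
    print(f"spectral radius {rho}: FTLE estimate = {value:+.3f}")
```

A negative value means nearby trajectories are converging on average; a positive value means they are diverging.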

Graph D, however, really surprised me. It shows that there is a different ideal spectral radius for each value of gain. This does make sense intuitively, though: at larger spectral radii, saturation occurs faster, which means a lower gain is needed to balance it out. Thus, I have a difficult decision to make. When I vary gain in the later experiments, should I change the network itself just to ensure chaos? Or should I accept that neuron saturation is an element that comes with higher gain? I’ll have to consider this from a biological perspective as well. That’s it for this week, however. It may seem like I didn’t make much progress, but I certainly have done a whole ton of work. Thanks for reading!
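One way to see the trade-off, under the assumption that gain multiplies the whole recurrent pre-activation (as in `x <- tanh(gain * W @ x)`, which may not match how gain enters the actual model): the gain and the spectral radius then simply multiply, so the matrix the network effectively sees has radius gain × radius. A quick check, with illustrative names and sizes:

```python
import numpy as np

def spectral_radius(W):
    """Largest absolute value among the eigenvalues of W."""
    return float(np.max(np.abs(np.linalg.eigvals(W))))

rng = np.random.default_rng(0)
n = 100  # illustrative network size (assumption)
W = rng.normal(0.0, 1.0 / np.sqrt(n), size=(n, n))
W *= 1.2 / spectral_radius(W)  # base spectral radius of 1.2

effective = {gain: spectral_radius(gain * W) for gain in (0.5, 1.0, 2.0)}
for gain, rho in effective.items():
    print(f"gain {gain}: effective spectral radius = {rho:.3f}")
```

Under that assumption, keeping the effective radius near a sweet spot means a larger base radius calls for a smaller gain, which is at least consistent with the direction of the shift in Graph D.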
