Week 9 (4/21-4/25) - Closing the Loop: Implementing RAG and Executing the Pipeline

April 24, 2026

Welcome back to my blog! This week was a productive one, where I finally closed the loop on several major open questions from the past few weeks. I made a final decision on SHAP, implemented the full RAG pipeline, and structured all of the code for the project into a clean, working system.

Firstly, I continued my reading on interpretability, this time through Christoph Molnar’s Interpretability book [1]. One of the main takeaways was that there is actually no single mathematical definition of interpretability. Molnar frames it through two lenses: a cause-based definition (the degree to which a human can understand the cause of a decision) and a predictive one (the degree to which a human can consistently predict the model’s result). The need for interpretability is because a correct prediction alone does not fully solve the underlying task, extremely relevant to clinical decision support where understanding the reasoning is just as critical

He also discussed the various types of interpretability methods. He distinguishes between intrinsic methods (where interpretability is built into the model structure itself, like a decision tree) and post hoc methods (where interpretation is applied after training). He differentiates between the scope of interpretability as well, from understanding a single prediction all the way to comprehending the entire model’s behavior. He also touches on what makes explanations feel “human-friendly”, especially selected explanations focused on one or two key causes – something I kept in mind for my pipeline to eventually reflect.

One major question I had this week was whether SHAP could realistically be integrated into this pipeline. After careful consideration, I decided not to implement SHAP. The core issue is that SHAP requires a scalar output to attribute feature contributions to. Generative LLMs like BioMistral use tokens, not class probabilities, which means there is no clean scalar to attribute back to. On top of that, SHAP is computationally expensive, and running it on my local setup is not feasible. Finally, clinical features like lab values and vitals are often correlated with each other, which violates SHAP’s core independence assumption and would make the attributions unreliable anyway. The RAG component still serves the same purpose of interpretability in a much more natural way: by finding and citing the specific clinical guidelines that informed each recommendation, the model’s reasoning is transparent without needing a separate explanation layer.

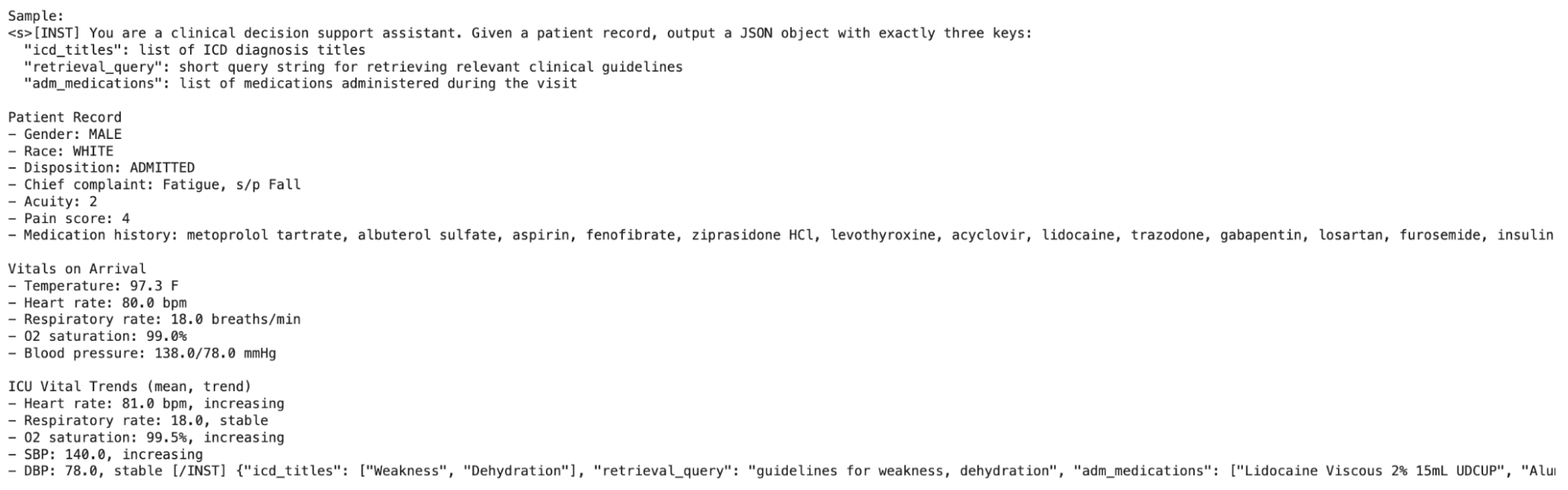

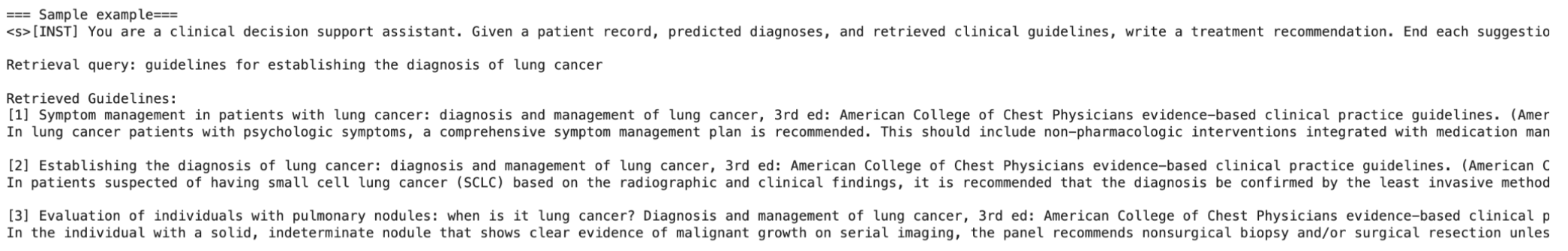

That brings me to the main goal of this week: implementing the full RAG flow. The final version has five steps: First, A patient note rendered from MIMIC features is inputted into BioMistral. Second, BioMistral outputs a structured JSON containing an ICD diagnosis guess with an explanation and a retrieval query, a short text string used to search CREST. Third, CREST topics are retrieved using that query via FAISS (Facebook AI Similarity Search), a library that indexes vectors and finds the nearest matches to a query vector extremely quickly. Fourth, BioMistral is re-prompted with the retrieved CREST recommendation and its strength. Finally, BioMistral generates the final treatment recommendation grounded in those CREST guidelines, with inline citations of the developer and recommendation strength.

I used S-PubMedBert-MS-MARCO as the embedding model, a biomedical-specific model that understands clinical language natively and so is much better suited for this matching than a general embedding. Retrieval is performed over the concatenation of each guideline’s title and text. The text helps overcome any vagueness in the title, while being truncated enough to stay within reasonable prompt lengths.

To keep everything organized and modular, I separated the overall pipeline of the project into four distinct notebooks.

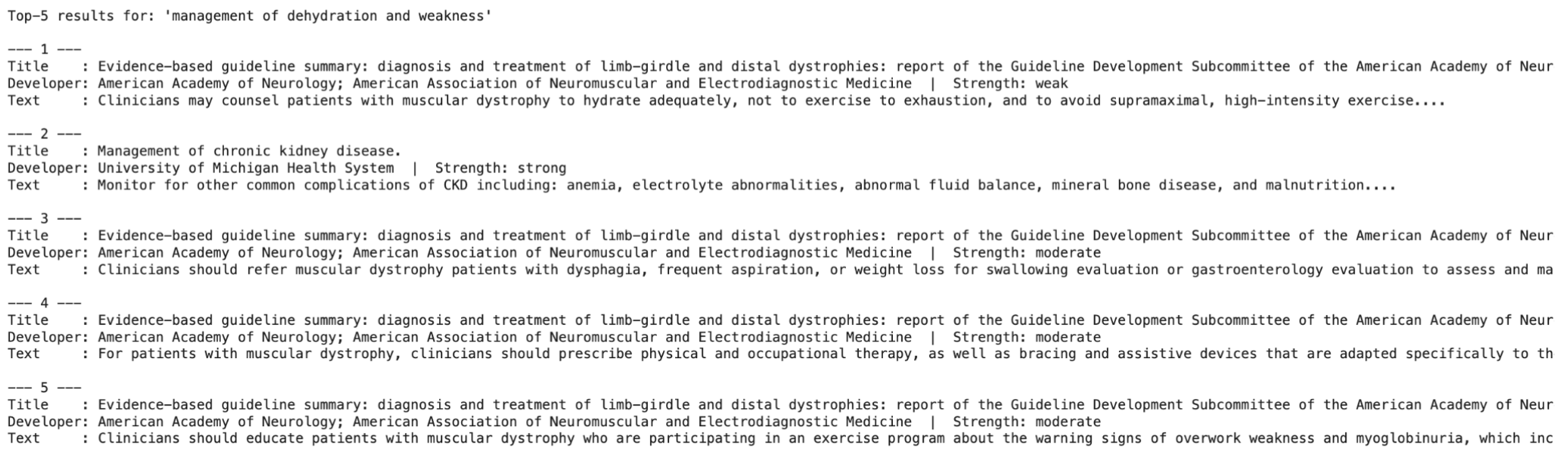

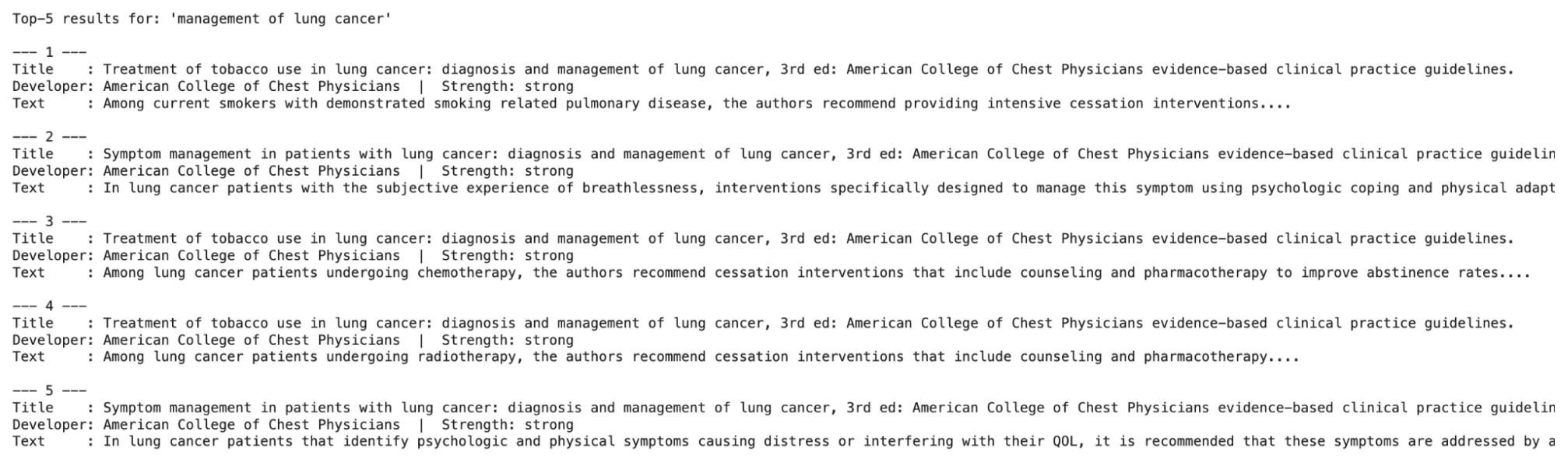

- Embedding with RAG – The first embeds every CREST guideline and then builds and saves the FAISS index. I tested this with a few sample queries, and the results were interesting: a vague prompt like “dehydration and weakness” returned fairly poor matches, while something more specific like “lung cancer” retrieved relevant, high-quality guidelines.

- Fine-tuning Stage 1 (MIMIC) – The second notebook fine-tunes BioMistral-7B using LoRA (a computationally lighter way to finetune models) to predict, from a patient record, the ICD diagnosis titles, medications administered during the visit, and the short retrieval query for CREST.

- Fine-tuning Stage 2 (CREST) – The third notebook adds a LoRA adapter trained on CREST guidelines to teach BioMistral the correct output format and habit for citation, specifically every recommendation must end with a developer and strength label. It is important to note that this adapter learns format and citation fidelity, not clinical correctness. It is trained on synthetic query and recommendation pairs derived purely from CREST rows, so no MIMIC patient labels are involved. Instead, two randomly chosen incorrect guidelines are included, and the model is trained to choose the correct one of the three.

- Putting it all together – The fourth notebook wires everything into a single predict() function that runs a patient row through all five steps end-to-end.

Since both fine-tuning stages need to run separately (and take a couple hours), the full results will be included in next week’s blog post once the entire pipeline is complete. With the CPT + fine-tuning, I am worried about having runtime issues (as I’ve seen others encounter), so I’d love to know what approaches you may have used to solve this! Mrs. Bhattacharya, my advisor, also suggested building the web app in parallel to display the input and output of the LLM. This week, I started planning that out. The app will take structured patient inputs (race, sex, chief complaint, pain scale, pre-existing conditions, medications, and vitals at both admission and during the stay) and output the diagnosis predictions sorted by recommendation strength.

Next week, I plan to evaluate the results of both fine-tuning stages once they complete, and build the web app using vibecoding. I’d love to hear from anyone who has worked on clinical LLM pipelines – specifically, how did you evaluate the quality of retrieval results, since it’s harder to decipher in a medical context? Stay tuned for next week!

Reader Interactions

Comments

Leave a Reply

You must be logged in to post a comment.

This is a really cool project! One thing I was wondering: you mentioned “dehydration and weakness” got worse matches than “lung cancer,” but one’s a symptom and the other’s basically already a diagnosis. Since BioMistral writes the retrieval query after its first diagnosis guess, isn’t there a risk the retrieved guidelines just back up whatever it guessed? What happens if that first guess is wrong? Curious how you’re thinking about cases where the first guess might be off.

Hi Vedh, thanks! While “dehydration and weakness” does sound like a symptom, it is an ICD diagnosis, and thus still counts. The retrieved guidelines do back up the predicted diagnosis (they are chosen this way). The first guess being wrong is one drawback in my project, but it is what I was able to do with the data I have available in both MIMIC and CREST. Hope that answers your question!

Hi Aanya, great progress this week! You’ve really done a lot since last week’s update. You must have put in a lot of time. My question is: are there any cases where the retrieved context is noisy or partially irrelevant, and if so, how do you handle them? Does it also significantly impact the model’s final output?