Week 3: Datasets and Directives

March 18, 2026

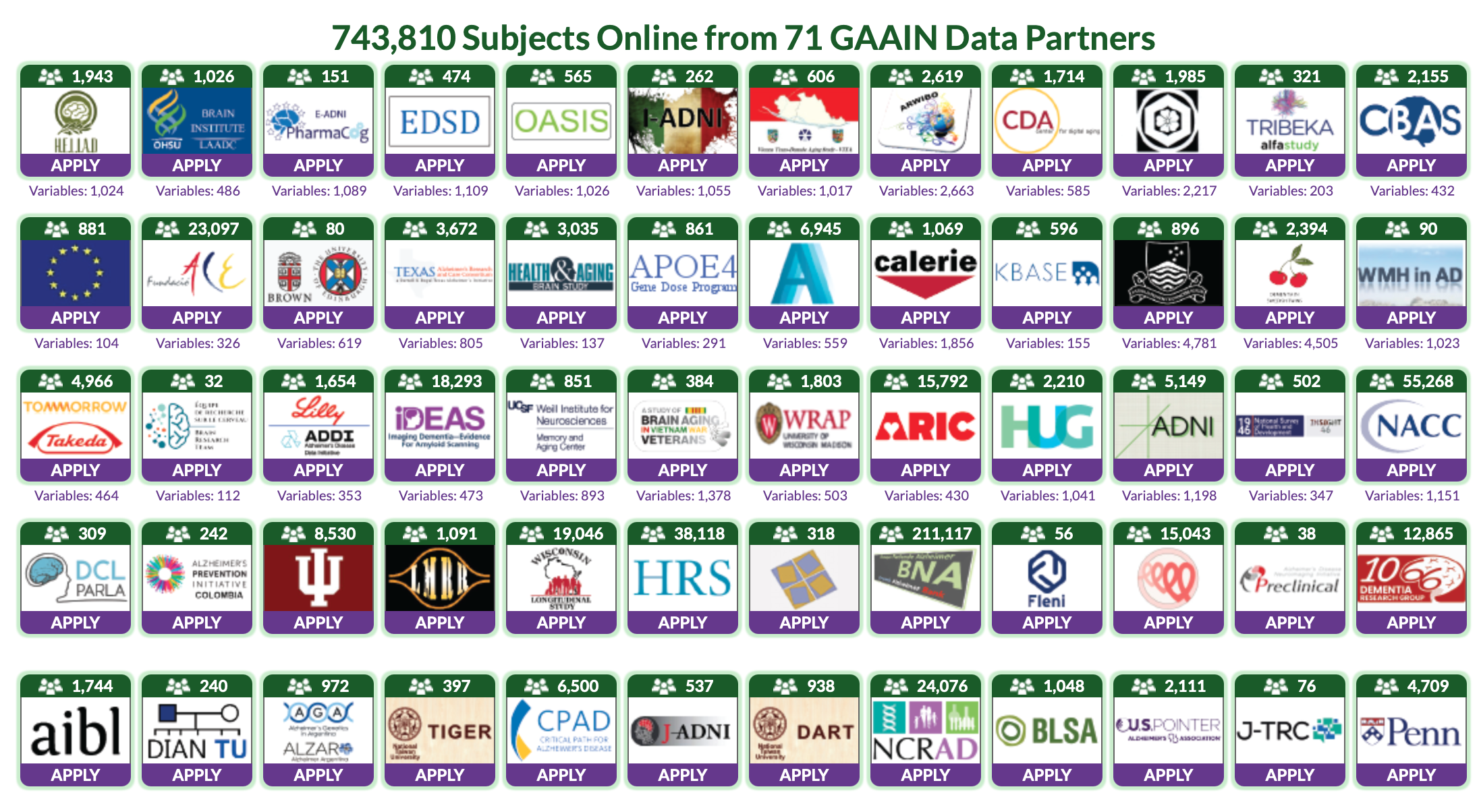

Hi all! This week, I compiled various datasets of tau-PET scans across different platforms/websites, and began requesting them with the help of my advisors. Specifically, the biggest find had to be a website that detailed close to 50 datasets and where to find them. This was a HUGE help, and I am extremely excited about this find. In the next couple weeks, I will begin requesting from all of them in order to use their data for my project. As for my Machine Learning course, I have completed the third and final week, and now am beginning to start setup of the ML model through applications like Google Colab, VSCode, and Jupyter Notebooks!

Now, where do these datasets fit in the grand scheme of things? With these datasets, I plan to use a 80/20 training-testing split, after generalization of course. Generalization here is probably the most important part of making sure the model works, as if it isn’t generalized, the model might interpret the data completely wrong(it might differentiate between different platforms used to make the brain scan rather than structures solely). In order to accomplish this, we must use tools like dcm2niix and NiBabel. They sound fancy, but in reality are actually simple, but powerful processes! Dcm2niix is a conversion tool, NiBabel is a python library for neuroimaging that has a few key formats(hence the reason for the Dcm2niix converter). Once these scans have been standardized, I will compute SUVR(Standardized Value Uptake Ratio) using the cerebellar reference region as well as the z score for the brain mask. ComBat Harmonization will also probably be used to remove any scanner/site effects. After this, we feed the standardized data into the model, and interpret its results through the 80/20 split. Overall, I am excited to begin this process, however I must still get approval to these datasets in the first place. Until next time!

Reader Interactions

Comments

Leave a Reply

You must be logged in to post a comment.

Exciting indeed! A good reminder for those following along on this blog as well is that there are a great deal of hoops one needs to go through to access these data sets to ensure that proper ethicality is being applied. This is just one of the aspects of doing research that slows the process, but which is absolutely necessary.

Hey Aditya, your project is very interesting, and it looks like you’re making good progress. One question I had was how you plan to consolidate the different datasets, especially if they may have different formats from different sources? I was just wondering what data cleaning/preprocessing you had planned.

Hi Aadrit,

Great question! I asked this myself actually when I was drawing up the methodology for my syllabus. The formats that we see on websites like Kaggle(JPEG/PNG) is vastly different than the ones we see from medical institutions(DICOM/NIfTL), however most have one thing in common. They are usually registered to the MNI152 standard brain(brain imaging template), or usually are able to be easily converted to this, allowing us to remove head size/shape differences and enable voxel-wise comparisons, needed for CNN generalization. However, there is still a difference present, which is in the file itself. This is where the converters I mentioned earlier come into play(dcm2niix and NiBabel). Dcm2niix allows me to convert any raw DICOM files into the desired NIfTL files, which will then be processed into the CNN using NiBabel. I hope this answered your question, feel free to let me know if you have any follow-ups!