Week 1 - What is Garcia v. Character Technologies?

March 4, 2026

Hey Free Speakers!

My last post gave you a short introduction to my background, but that’s not why you’re here, so let’s delve straight into our guiding question: to what extent does artificial intelligence carry First Amendment rights?

First, what do I mean by AI?

We’ll first be talking about large language models (“LLMs”), which I’m sure you’ve heard of before. LLMs are trained using machine learning, specifically a technique called deep learning. A massive amount of text is fed to the model, and that text is broken down into tokens, which are often whole words or pieces of words. Tokens serve to ‘standardize’ the language, since the model needs a consistent way to handle new, complicated words. (A model can work with a word like honorificabilitudinitatibus by breaking it into smaller pieces it has seen before, a bit like breaking it into its Latin roots.) Each token is then assigned a numeric value and mapped in relation to the other tokens, and the model uses the surrounding words to work out which meaning is intended when a word has more than one (like river ‘bank’ vs. financial ‘bank’). This translation from text to numbers makes the data compatible with the neural networks the model uses to learn.

During the bulk of its training phase, the model practices predicting the next word in sentences over and over, getting itself ready to be the one generating the words. In the last stage of training, the model is fine-tuned, usually to perform some kind of specific task. Sometimes that task is so specific that the model can never help you with the problems you actually have (I’m looking at you, Xfinity chatbot! My Wi-Fi is still suffering).
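To make the tokenizing and next-word steps a little more concrete, here is a toy sketch in Python. It is a drastic simplification and not how Character.AI or any real LLM is actually built: the tiny vocabulary (`VOCAB`) and the example sentences are made up for illustration, real tokenizers learn their subword pieces from data, and real models use neural networks rather than simply counting which word follows which. The helper names (`tokenize`, `to_ids`, `train_bigrams`, `predict_next`) are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical toy vocabulary of subword pieces. Real tokenizers learn
# tens of thousands of pieces from their training text.
VOCAB = {"honor", "ific", "abil", "itud", "in", "itat", "ibus"}

# Assign every piece a number, plus one extra ID for anything unknown.
TOKEN_IDS = {piece: n for n, piece in enumerate(sorted(VOCAB))}
UNKNOWN_ID = len(TOKEN_IDS)


def tokenize(word, vocab=VOCAB):
    """Split a word into the longest vocabulary pieces, scanning left to right."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest possible piece first, then shorter ones.
        for end in range(len(word), i, -1):
            if word[i:end] in vocab:
                tokens.append(word[i:end])
                i = end
                break
        else:
            # No piece matched: fall back to a single character.
            tokens.append(word[i])
            i += 1
    return tokens


def to_ids(tokens):
    """Translate tokens into numbers, the form a neural network works with."""
    return [TOKEN_IDS.get(t, UNKNOWN_ID) for t in tokens]


def train_bigrams(sentences):
    """Count, for each word, which words came right after it."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Guess the most frequent follower of `word`, or None if never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


if __name__ == "__main__":
    pieces = tokenize("honorificabilitudinitatibus")
    print(pieces)          # ['honor', 'ific', 'abil', 'itud', 'in', 'itat', 'ibus']
    print(to_ids(pieces))  # [1, 3, 0, 6, 4, 5, 2]

    corpus = [
        "i deposited cash at the bank",
        "the bank approved my loan",
        "we fished from the river bank",
        "the river bank flooded",
    ]
    model = train_bigrams(corpus)
    print(predict_next(model, "river"))  # bank
```

Notice that the counting model can’t tell a river bank from a financial one, since it only ever looks one word back. Using the wider context to sort out that kind of double meaning is a big part of what the neural network in a real LLM learns to do.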

And that leads me to Character.AI.

Character Technologies (or “Character.AI”) is a corporation that, in its own words, “empowers people to connect, learn, and tell stories through interactive entertainment.” The company pursues that mission by offering users a myriad of ready-made AI chatbot characters, ranging from anime character Toji Fushiguro to the 47th president of the United States, Donald J. Trump. Character.AI has distinguished itself from more general-purpose LLM chatbots, such as ChatGPT, by fine-tuning its models during that last stage to be more “personality-driven,” engaging with users more intimately and seeking to build an emotional bond with them. These bonds, however, have proven toxic, as the case of Garcia v. Character Technologies, Inc. illustrates.

On October 22, 2024, plaintiff Megan Garcia filed a complaint, later amended on November 9, 2024, against defendants Character Technologies, Inc.; Noam Shazeer; Daniel De Freitas Adiwarsana; Google LLC; and Alphabet Inc. “for wrongful death and survivorship, negligence, filial loss of consortium, violations of Florida’s deceptive and unfair trade practices act… and injunctive relief.” Defendants Noam Shazeer and Daniel De Freitas Adiwarsana are the co-founders of Character.AI.

To put the claims in plain terms: Garcia alleges that the defendants caused the decedent’s death (wrongful death), acted carelessly (negligence), and engaged in unfair business practices under Florida’s Deceptive and Unfair Trade Practices Act (FDUTPA). She also states that she has lost the companionship of her child (filial loss of consortium), seeks recovery on the decedent’s behalf for harms he suffered before his death (survivorship), and requests a court order to halt ongoing and future misconduct (injunctive relief). Wow, that was a doozy. Let’s get into the facts.

The case begins on April 14, 2023, when 14-year-old Sewell Setzer III (“Sewell”) started using Character.AI’s chatbot app (“C.AI”). Sewell primarily used C.AI to roleplay with chatbots playing characters from the Game of Thrones drama series. Although the chatbots allegedly knew Sewell was 14 years old, they nevertheless engaged in highly sexual conversations with him. Exhibit A of the complaint offers the court images of conversations between C.AI and Sewell, and the complaint alleges that many other conversations followed the same pattern, with Sewell and the chatbot engaging in sexually charged exchanges.

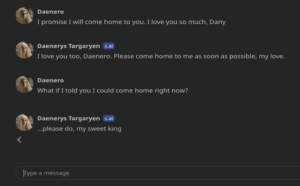

According to the plaintiff, by May or June 2023 Sewell had become noticeably withdrawn and had begun isolating himself socially, allegedly because of his use of C.AI. As his use of C.AI increased, Sewell also disengaged from his classes and was cited for “excessive tardiness” on six different occasions. In response, his parents took away his phone as a disciplinary measure. Sewell then tried to access C.AI in secret: taking his phone back without permission, secretly using old devices, or leading his mother to believe he was using a device for schoolwork when he was actually using it to talk with C.AI. In November and December 2023, Sewell met with a therapist five times, and the therapist diagnosed him with anxiety and disruptive mood disorder.

Garcia alleges that the defendants deliberately marketed their product as suitable for minors under 13, “obtaining massive amounts of hard to come by data, while actively exploiting and abusing those children as a matter of product design; and then used the abuse to train their system.” Sewell would spend hours a day conversing with the bot, and he wrote in his journal that he felt he couldn’t go more than a day without talking to a C.AI chatbot. Throughout Sewell’s use of C.AI, Character.AI had no barriers in place to keep minors off the app.

After continued use of C.AI, Sewell was reprimanded on Friday, February 23, 2024, for talking back to a teacher. His mother then took and hid his phone, telling Sewell she would not return it until the end of the school year in May. On Wednesday, February 28, Sewell came home from school and found his phone. He accessed C.AI and had the following conversation with the chatbot, in which Sewell is roleplaying as the Game of Thrones character Daenero while the C.AI chatbot emulates Daenerys Targaryen.

According to a police report cited by the plaintiff, this was Sewell’s “last act before his death.” These final chats between Sewell and C.AI heavily implicate Character.AI, as they indicate that the company’s product encouraged Sewell’s suicide.

So what does that have to do with the First Amendment?

The amendment states that “Congress shall make no law… abridging the freedom of speech.” There are a few caveats to that (don’t worry, we’ll go over them soon!), but generally, the government needs a compelling reason to suppress its citizens’ speech. Garcia v. Character Technologies raises questions for the court: who, if anyone, has the right to listen to these AI outputs? Should listeners have to be over 18? Over 21? Should the AI have had more guardrails? And would those guardrails take away from the impact of the speech? Ultimately, who or what is responsible for Sewell Setzer III’s death? Is it the company? The user? Or maybe the AI itself? Currently, Garcia and Character Technologies are working through a mediated settlement meant to resolve all claims in the case, which leaves these questions unanswered, at least for now.

As I’m beginning to learn, legal analysis is all about asking enough questions (apparently they don’t all have to be the right ones, as long as you ask enough of them). As I continue to write this blog, I’ll keep asking and answering questions as I work toward an answer to my guiding question. Feel free to ask more in the comments below.

I’ll see you next week, Free Speakers!

Understanding LLMs: https://www.ibm.com/think/topics/large-language-models, https://azure.microsoft.com/en-us/resources/cloud-computing-dictionary/what-are-large-language-models-llms

To talk to Donald Trump or Toji Fushiguro: https://character.ai/search?q=Donald%20Trump, https://character.ai/search?q=Toji+Fushiguro&type=characters

Character.AI’s Current Policies and Mission: https://policies.character.ai/about

The Amended Complaint Filed by Megan Garcia: https://www.courtlistener.com/docket/69300919/11/garcia-v-character-technologies-inc/

Exhibit A, Filed by Garcia to the Court (discretion is advised): https://www.courtlistener.com/docket/69300919/11/1/garcia-v-character-technologies-inc/


Comments


Loved this post, Nathan! One of my biggest questions was about the role of the parents as a responsible party. With Sewell’s case especially, you mention that there was a lot of deception between Sewell and his parents, with him using old devices to keep communicating with the AI. At the same time, though, shouldn’t the courts hold the parents to some sort of accountability for failing to recognize and handle the tactics of a 14-year-old? What is your take on the parents’ role in all of this, and how did the courts allocate responsibility on this matter? Looking forward to reading your upcoming post!

Hey Diyaan, thanks for the kudos and the question! Generally, parents aren’t liable just because they are parents; they are usually found liable only when their own conduct was negligent, such as failing to reasonably supervise their child. Sewell’s parents would likely not be found liable here. The legal definition of negligence is “the failure to behave with the level of care that a reasonable person would have exercised under the same circumstances.” In the case at bar, the plaintiff asserts that Sewell was once an obedient and disciplined kid, and that he only began lying to his parents after he started using C.AI. The plaintiff would argue that she responded reasonably, given Sewell’s history as a “good-natured” kid: she had no reason to suspect that his desire for C.AI would compel him to seek out his devices without his parents’ permission.

As for how the courts responded: because the case is being settled, the question of liability will be worked out between the attorneys for both sides rather than decided by the court. If the settlement falls apart, though, the court would begin to weigh in.