Week 6: U-Net Experimentation

April 4, 2026

Hi everyone, welcome back to my blog! This week, let’s attempt to improve the results of the damage assessment U-Net, my worst-performing model (so far).

Motivation

After reading some of the comments I’ve received in previous weeks and consulting with my internal advisor, I realized that the U-Net’s failure to learn may be due to its architecture. I’ve noticed that when I train the U-Net directly on the damage assessment dataset, it often picks out gray patches of roads or roofs and can’t tell the difference between roads and buildings. This is likely due to a lack of context, especially since damage to buildings is hard to detect, even visually. Honestly, not even I would have realized the T-shaped building on the right was destroyed if not for the mask: it just looks like a building with many things on the roof!

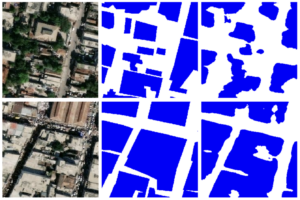

So the next step is to see if the U-Net can detect houses pre-disaster—a sanity check to make sure the U-Net is able to pick up the right patterns for houses at all, and possibly a way to help it detect damage better via transfer learning.

U-Net House Detection

After changing some settings of the road detection U-Net and using the pre-disaster image-label pairs of the Haiti Earthquake dataset [1], I was able to get it to separate out the shapes of the houses. It performed the task very well, actually, which makes sense because buildings are generally easier to spot than damage.

This is a good sign so far. Next, I wondered how to use the separated buildings to detect damage. I couldn’t crop the buildings out of the images and pass them to another model to classify the damage, because the building shapes are often irregular and hard to crop. Additionally, I wanted to stick with the U-Net to avoid extra complexity. Eventually, I found online that one way to incorporate both pre-disaster and post-disaster images into damage assessment is a Siamese architecture. I decided to combine that with pre-training my U-Net on house detection.

Siamese U-Net

This model uses two encoders with shared weights that take in different images, one pre-disaster and one post-disaster, then concatenates their feature maps and passes them to a single decoder for damage classification [2]. I’ve taken inspiration from the first-place Siamese U-Net of the xView2 challenge, and also made mine two-stage [3]. I first train the model to detect houses using the pre-disaster images, then train it on the combined pre-disaster and post-disaster images for damage classification. The idea behind a Siamese architecture with shared-weight encoders is that both images pass through the same feature extractor, so the features are directly comparable and the decoder can focus on what changed between them.
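To make the shared-encoder idea concrete, here is a toy sketch in plain Python. It is not my actual model: the "encoder" is just a per-pixel weighted sum instead of a stack of convolutions, and the function names and weight values are made up for illustration. What it does show is the structure described above: one set of encoder weights applied to both images, features concatenated per pixel, and one decoder producing a class per pixel.

```python
# Toy illustration only: hypothetical names and weights, no deep-learning library.

def encode(image, encoder_weights):
    """Shared encoder: maps each pixel's channels to a list of features.

    image: H x W nested list, each pixel is a list of channel values.
    encoder_weights: one weight list per output feature.
    """
    return [
        [[sum(w * c for w, c in zip(filt, pixel)) for filt in encoder_weights]
         for pixel in row]
        for row in image
    ]

def siamese_forward(pre_image, post_image, encoder_weights, decoder_weights):
    """Run both images through the SAME encoder, then one decoder."""
    pre_feats = encode(pre_image, encoder_weights)    # same weights ...
    post_feats = encode(post_image, encoder_weights)  # ... for both inputs
    prediction = []
    for pre_row, post_row in zip(pre_feats, post_feats):
        out_row = []
        for pre_f, post_f in zip(pre_row, post_row):
            fused = pre_f + post_f  # concatenate features along the channel axis
            # Decoder: one linear score per class, pick the highest (ties -> lowest index).
            scores = [sum(w * f for w, f in zip(class_w, fused))
                      for class_w in decoder_weights]
            out_row.append(scores.index(max(scores)))
        prediction.append(out_row)
    return prediction

# A 1 x 2 single-channel "image": the first pixel gets darker after the disaster.
pre = [[[0.9], [0.2]]]
post = [[[0.1], [0.2]]]
encoder_weights = [[1.0]]                      # one feature per pixel
decoder_weights = [[0.0, 0.0], [1.0, -1.0]]    # class 0: unchanged, class 1: pre minus post
print(siamese_forward(pre, post, encoder_weights, decoder_weights))
```

Because both images go through the same weights, a feature value means the same thing in the pre and post branches, which is what lets even this trivial decoder detect change by comparing them.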

But does this actually live up to the hype?

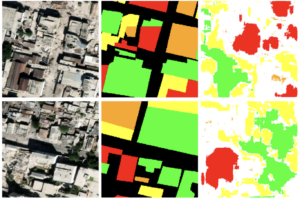

Here are the results.

By visual inspection, I think it’s an improvement over the U-Net without the Siamese architecture. The “blobs” are closer to where the houses are, and the destroyed classes are in roughly the right place relative to the actual damage. Of course, it’s still not as good as SegFormer, and it still tends to produce blob shapes instead of recognizing individual houses. What do the numbers say?

The original pure U-Net damage detection achieved a mean IoU of 0.21 and a mean F1 score of 0.32. Meanwhile, the Siamese U-Net achieves noticeably better results: 0.34 for mean IoU and 0.50 for mean F1 score. It’s certainly better, which suggests that the Siamese architecture is promising. If I had more time, I would compare my Siamese U-Net to the xView2 winning entry by Victor Durnov [4], and find ways to improve my model to get closer to its results. Since I’m running pretty late on my project already, and I have a trip to China coming up, I think this might have to wait. In next week’s post, I’ll be gathering the metrics to compare the three models (U-Net, DeepLabv3+, and SegFormer) to see how they perform against each other.
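For readers unfamiliar with the two metrics, here is a minimal sketch of how mean IoU and mean F1 can be computed from flattened label maps. It assumes a plain macro average over classes and skips classes that appear in neither the labels nor the predictions; the exact averaging used for the numbers above (for example, whether background is included) may differ. The function name is hypothetical.

```python
def mean_iou_and_f1(y_true, y_pred, num_classes):
    """Macro-averaged IoU and F1 over classes, from flat lists of class ids."""
    tp = [0] * num_classes  # pixels correctly assigned to class c
    fp = [0] * num_classes  # pixels wrongly predicted as class c
    fn = [0] * num_classes  # pixels of class c that were missed
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1
            fn[t] += 1

    ious, f1s = [], []
    for c in range(num_classes):
        union = tp[c] + fp[c] + fn[c]
        if union == 0:
            continue  # class absent from both labels and predictions
        ious.append(tp[c] / union)                            # IoU = TP / (TP + FP + FN)
        f1s.append(2 * tp[c] / (2 * tp[c] + fp[c] + fn[c]))   # F1 = 2TP / (2TP + FP + FN)
    if not ious:
        return 0.0, 0.0
    return sum(ious) / len(ious), sum(f1s) / len(f1s)

# Tiny made-up example with three classes.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
miou, mf1 = mean_iou_and_f1(y_true, y_pred, num_classes=3)
print(round(miou, 3), round(mf1, 3))
```

For a single class, F1 is always at least as high as IoU, since F1 = 2·IoU / (1 + IoU), which is why the F1 numbers above are consistently higher than the IoU numbers.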

Works Cited

[1] Lin, Szu-Yun, et al. “HaitiBRD: A Labeled Satellite Imagery Dataset for Building and Road Damage Assessment of the 2010 Haiti Earthquake.” Designsafe-Ci.org, DesignSafe-CI, Dec. 2023, https://doi.org/10.17603/ds2-fqat-4v02. Accessed 28 Mar. 2026.

[2] Chen, Tao, et al. “A Siamese Network Based U-Net for Change Detection in High Resolution Remote Sensing Images.” IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, vol. 15, 1 Jan. 2022, pp. 2357–2369, https://doi.org/10.1109/jstars.2022.3157648. Accessed 23 July 2023.

[3] Zheng, Zhuo, et al. “Building Damage Assessment for Rapid Disaster Response with a Deep Object-Based Semantic Change Detection Framework: From Natural Disasters to Man-Made Disasters.” Remote Sensing of Environment, vol. 265, Nov. 2021, p. 112636, https://doi.org/10.1016/j.rse.2021.112636.

[4] Durnov, Victor. “GitHub – Vdurnov/Xview2_1st_place_solution: 1st Place Solution for ‘XView2: Assess Building Damage’ Challenge.” GitHub, 2020, https://github.com/vdurnov/xview2_1st_place_solution.

Comments

Hi Yujie, I understand how training models for geospatial analysis can get tricky at times. I think the only two options you have are to keep pushing with the same goal in mind or to reduce the scope of the project. For next week’s comparison, are you planning to include the Siamese or the regular U-Net in the metrics?

Hi Yujie! It seems like you’ve made some progress to solve some of your challenges in the past couple of weeks. I really liked the idea of testing the U-Net for pre-disaster houses as a baseline. The fact that it was able to identify the pre-disaster houses is quite promising! I see that you’re going to be comparing the metrics of the three models next week. Have you been training the other two models alongside the U-Net as well, or will you be getting to those in the coming week?

Hi Yujie!

Seems like you’ve made a lot of progress in improving your model! How does the Siamese U-Net differ from the regular U-Net? I understand that the Siamese U-Net gave a better classification result, but I’d like to know how it uses the pre-disaster and post-disaster images to classify damage. How does learning the same features improve the classification?